About

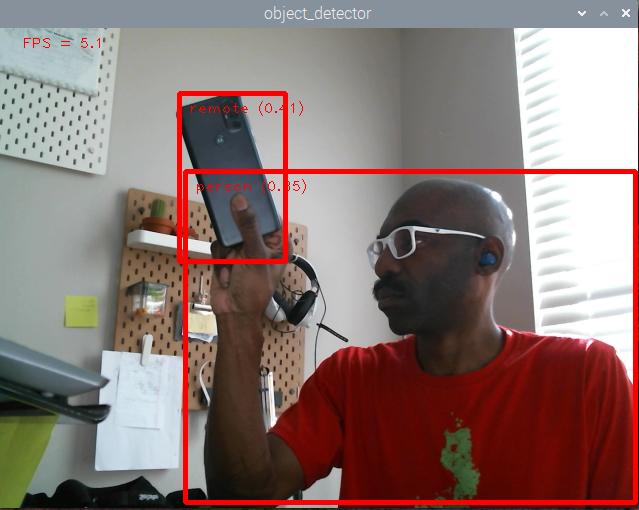

Object Detection with OpenCV on Raspberry Pi, 2022

I'm a creative technologist with 15+ years of experience in web development. Self-taught, I lead with curiosity, a bias towards action, and a need to get my hands dirty learning by doing.

The result is a broad functional knowledge base, a couple of pillars of domain expertise, and proficiency with AI tools that lets me go deep on a per-project basis.

As a former board member at 934 Gallery, I understand cultural institutions from the inside: the budgets, the missions, the balance between public experience and operational reality. I've built interactive exhibits, originated youth programs, and managed the kind of cross-functional work that small teams require.

Projects

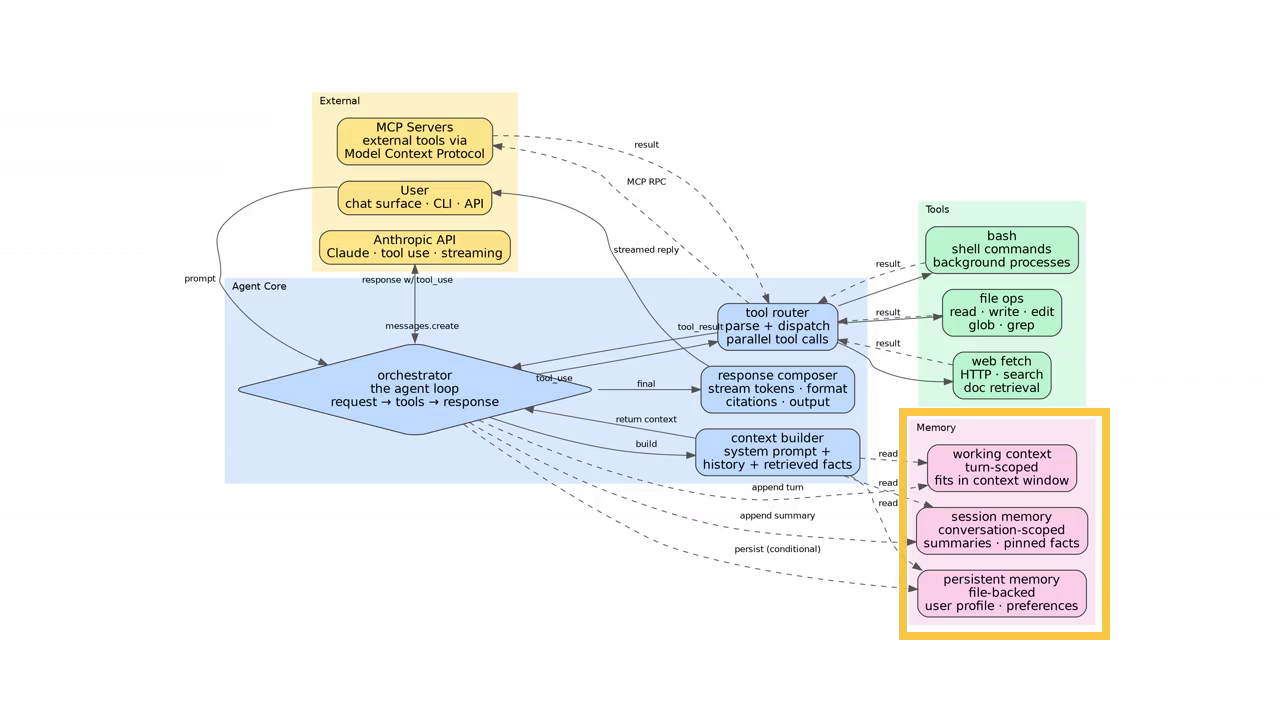

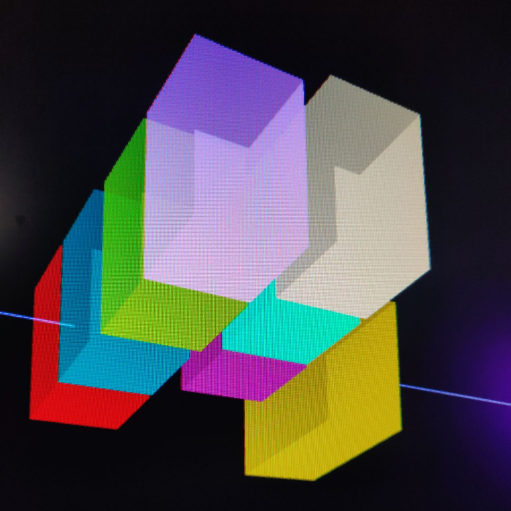

diagram-tour

Claude Code Skill

A 3-minute tour of a generic Claude-style AI agent architecture, generated by diagram-tour. Click to view the repo on GitHub.

A Claude Code skill that turns any codebase into a narrated architecture-tour video. "Explain this codebase" yields a ~6-minute MP4 walking through the project cluster by cluster, with arrows and highlights synced to the narration. It generalizes beyond code; the same pipeline works on any complex system or process you can describe as a directed graph.

How it works: A four-stage pipeline: analyze the repo, generate a Graphviz .dot diagram, write a Markdown narration script, and render with Piper TTS and a deterministic ffmpeg overlay chain. Each expensive step is gated by a confirmation prompt so the diagram and narration can be refined in plain English before committing to a render. A per-sentence content-addressable voice cache keeps iteration fast.
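To illustrate the per-sentence cache idea, here is a minimal Python sketch, not diagram-tour's actual code. It assumes the cache key is a SHA-256 hash of the voice name plus the whitespace-normalized sentence, and that clips are stored as `.wav` files named by that key. The function names (`cache_key`, `cached_audio_path`, `sentences_to_render`) are hypothetical.

```python
import hashlib
from pathlib import Path


def cache_key(sentence: str, voice: str) -> str:
    """Derive a stable key from the sentence text and voice name (assumed scheme)."""
    # Collapse whitespace so reflowing the script does not invalidate the cache.
    normalized = " ".join(sentence.split())
    payload = f"{voice}\n{normalized}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def cached_audio_path(cache_dir: Path, sentence: str, voice: str) -> Path:
    """Location where the rendered clip for this sentence would live."""
    return cache_dir / f"{cache_key(sentence, voice)}.wav"


def sentences_to_render(sentences, voice, cache_dir: Path):
    """Return only the sentences that have no cached clip yet."""
    return [
        s for s in sentences
        if not cached_audio_path(cache_dir, s, voice).exists()
    ]
```

Because the key depends only on content, editing one sentence of the narration would re-synthesize that sentence alone; every unchanged sentence resolves to an existing file.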

I built diagram-tour because working with agents pushes my mental model of a project ahead of my ability to review what gets produced; a narrated tour forces a cohesive top-down explanation that I (and collaborators) can replay anytime. Open-sourced under Central Tower Labs.

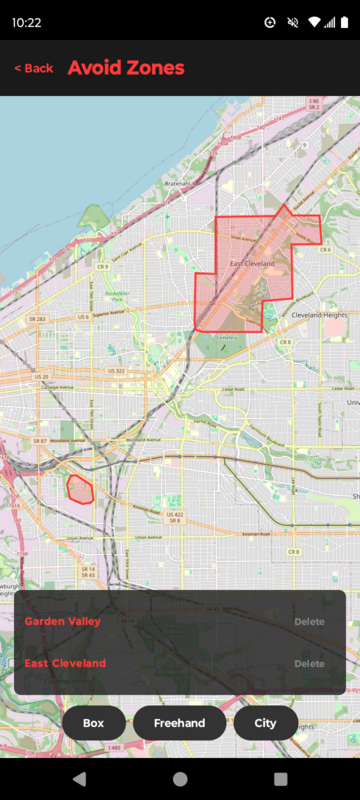

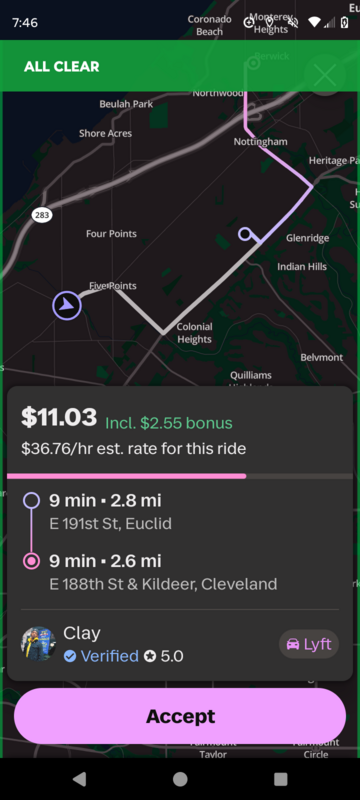

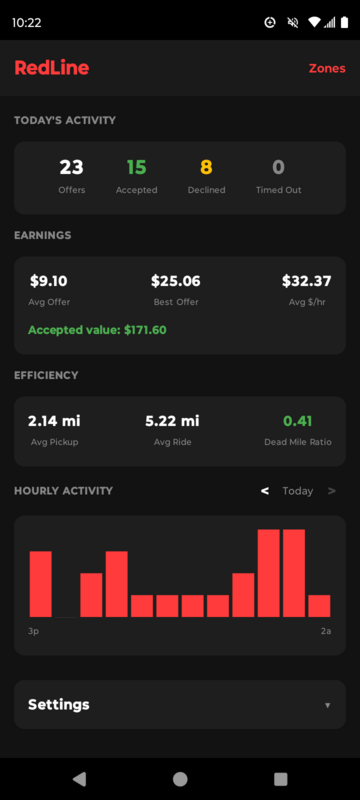

RedLine

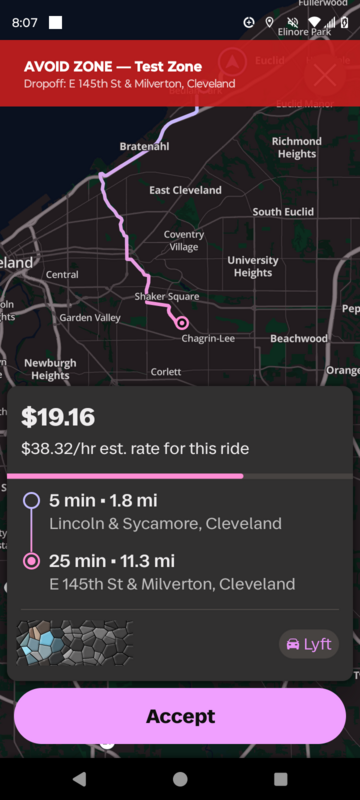

Android App

RedLine is a safety app for Lyft drivers, currently in closed beta.

The story: I was assaulted by a passenger on 3/13/26. The cops said it was one of the most dangerous neighborhoods in America. After filing a report — the police wouldn't even enter to check it out — the very next ride Lyft matched me with would have taken me back to the very same place. So I made this app to put driver safety first.

Product: Drivers mark zones to avoid on the map — freehand, by box, or by whole-city boundary. Every incoming ride offer is checked against those zones in real time, with a clear alert when pickup or dropoff falls in a no-go area.
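The zone check can be pictured as a point-in-polygon test. The sketch below is an illustration in Python, not RedLine's Android implementation. It assumes each zone is a list of (longitude, latitude) vertices and treats coordinates as planar, which is only a reasonable approximation for city-sized zones. `point_in_polygon` and `ride_hits_zone` are hypothetical names.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (longitude, latitude), treated as planar x/y


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many edges a ray to the right crosses."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the point's y value can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def ride_hits_zone(pickup: Point, dropoff: Point, zones: List[List[Point]]) -> bool:
    """True when the pickup or the dropoff falls inside any avoided zone."""
    return any(
        point_in_polygon(p, zone)
        for zone in zones
        for p in (pickup, dropoff)
    )
```

A box zone is just a four-vertex polygon, and a freehand or city-boundary zone is a longer vertex list, so one test could cover all three drawing modes under these assumptions.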

Fidget Feed

Android App

The problem: The average person picks up their phone 96 times a day. Most screen-time solutions fight human behavior head-on, blocking apps and hoping willpower handles the rest. After quitting Instagram cold turkey, I found myself picking up my phone throughout the day and just staring at the empty space where the icon used to be, with nothing to do. That was the seed of the Fidget Feed user experience: a tappable, swipeable UI playground with haptic feedback, an attempt to give my brain the dopamine it craved without the distraction of algorithmic feeds.

Product: Using Sensor Tower for market analysis and social media communities for user research, I validated the concept and developed a distinct name and design language. Fidget Feed is a two-word domain that describes the product in the simplest terms.

Design: Instead of stopping users dead in their tracks, I designed an alternate feed of low-cognitive-load fidget widgets. Figma explorations took the feed and onboarding through multiple iterations. The breakthrough: the best way to communicate the experience is to put live interactive widgets front and center in onboarding. It is better shown than described.

Tech: Built natively in Android Studio with coding agents as a core part of the workflow. Starting with GitHub Copilot Agent taught me the fundamentals of context engineering. Moving to Claude Code with the Opus 4.5 release was a step change in agent capability.

Where it is now: Entering beta, the final step before submitting to the Google Play Store.

Clicky Wheel: a satisfying rotary fidget with haptic clicks.

Digital Garage + 934 Gallery

Interactive Art

In 2022, I joined the board of 934 Gallery, a beloved art gallery in the Milo Grogan neighborhood of Columbus, Ohio. That summer, while I was volunteering for Mural Fest, the gallery's annual fundraiser, then board president Liz Martin accepted my proposal to reclaim an unused garage space and turn it into a digital-focused art space. I built exhibits using vision tracking systems, projection mapping with TouchDesigner, and hand-made LED panel installations. Visitors walk into a room and the art responds to their movement.

That first collaboration led to a Columbus City Pulse feature and a Columbus Arts Council grant to create the 934 Digital Garage Youth Summer Design Thinking Camp, a free five-week STEM camp for 12 Columbus City Schools high schoolers. Students created 2D animations in Pencil and 3D models in Blender, then used TouchDesigner to build an immersive projection-based art piece. This was their final experience design project, unveiled at an opening reception during Mural Fest. Every student kept their tech kit at the end.

The following year, I partnered with COSI to develop a four-week Systems Thinking STEAM module for their Platform Program. Students learned the four modes of systems thinking (making distinctions, seeing cause and effect, understanding parts and wholes, and taking perspectives) through hands-on art making with a different guest creator each week: Allan Pichardo (software developer and digital musician), Krista Faist (art educator), Jayce (AI artist and workshop facilitator), and Dom Deshawn (Columbus-based performer).

Untitled: In the Garage east room, visitors enter a cold dark space. The vision system tracks the movement of their right hand only.

TouchDesigner was used to map a volume of virtual space to the real space of the Garage.

Student workstations set up before a session.

Apophis Countdown

Web App

A personal project with the intention of turning public attention heavenward. 99942 Apophis is an asteroid roughly the size of the Eiffel Tower on course to pass between the Earth and Moon on April 13, 2029. Discovered in 2004, it went from a possible collision threat to confirmed safe, and passed back into obscurity. I suspect that for a day or two around the flyby it will be one of the most discussed stories on the planet. This project is a long play for that moment of attention.

What I built: An educational countdown site with a 3D interactive orbital viewer, a public REST API for developers, and a content strategy built around structured data and SEO.
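The core arithmetic of a countdown like this is small. The following Python sketch is illustrative only and is not the site's code. It assumes the target is midnight UTC on April 13, 2029, because the text above gives only the date and not the time of closest approach. `FLYBY_DATE` and `time_until_flyby` are hypothetical names.

```python
from datetime import datetime, timezone

# Assumption: midnight UTC on the flyby date; the exact time of closest
# approach is not stated here, so only the date is used.
FLYBY_DATE = datetime(2029, 4, 13, tzinfo=timezone.utc)


def time_until_flyby(now: datetime):
    """Return (days, hours, minutes, seconds) remaining, or zeros once passed."""
    remaining = FLYBY_DATE - now
    if remaining.total_seconds() <= 0:
        return (0, 0, 0, 0)
    hours, rem = divmod(remaining.seconds, 3600)
    minutes, seconds = divmod(rem, 60)
    return (remaining.days, hours, minutes, seconds)


if __name__ == "__main__":
    d, h, m, s = time_until_flyby(datetime.now(timezone.utc))
    print(f"{d} days, {h:02d}:{m:02d}:{s:02d} until the flyby date")
```

Passing `now` in as a parameter, rather than reading the clock inside the function, keeps the calculation easy to test against fixed timestamps.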

memory.audio

Web App

As I dove into the AI state of the art in early 2025, I built memory.audio to learn how to leverage LLMs to deliver real value to users. The app takes a single plain-English prompt and generates a 4–5 minute audio lesson on practically any subject.

Building in public on X, the app drew about a hundred users who provided feedback. A second iteration added rudimentary cognitive assessments: memory baselines users could track over time. A third added two-party conversation-style podcasts to complement the rote-repetition lessons. At that point, I realized I was recreating Google NotebookLM and decided to retire the project. The cognitive assessment angle still has legs; I expect to revisit it.

Tech: What started as scripts on my laptop became a fully deployed web app powered by OpenAI's completion API and Google Cloud Text-to-Speech. The project has since been taken offline.

Technologies

Agentic Engineering

I build with coding agents as a core part of my workflow, not as a crutch but as a multiplier that lets me move faster across unfamiliar stacks.

Languages

- JavaScript

- Python

- Kotlin

- HTML / CSS

Cloud & Infrastructure

- AWS (Lambda, S3)

- DigitalOcean

- Azure

- Linux / Nginx

Tools & Frameworks

- Node.js

- Android SDK

- Three.js

- OpenAI / GCP APIs

Hardware & Physical

- Computer Vision

- TouchDesigner

- Projection Mapping

- LED Panel Systems